The following essay is about modern macroeconomic theory. The field of macroeconomics has taken quite a beating in the aftermath of the Global Financial Crisis. The Queen of England famously asked the profession: "Why did nobody see it coming?" While it is not entirely clear whether macroeconomic theory can or ever will be able to predict financial and economic crises in advance, there is no doubt that the field as a whole was not well prepared for the events that occurred after 2008. While many Keynesian economists vehemently argued for more aggressive monetary and fiscal stimulus programs, other colleagues in the field questioned the efficacy and desirability of such policy interventions (Krugman, 2009). Part of the problem was that the entire profession took a wrong turn in the early 1980s and never recovered from the mistakes that were made back then. In what follows, I borrow heavily from Roger Farmer's intriguing book "Prosperity for All", in which he criticizes the field and argues for a paradigm shift in macroeconomic theory (Farmer, 2016).

Lakatos et al. (1980) define a degenerative research program as one that does not allow for the refutation of wrong hypotheses. A paradigm shift à la Kuhn (1957) or even complete refutation à la Popper (2009) does not take place. The "rotten core" is maintained by the leading experts in the field, who have an incentive to protect their legacy. Instead of throwing out the flawed basis altogether, more and more ad-hoc assumptions and minor tweaks are added to the models in order to make them fit the data. Farmer (2016) argues that this is precisely what has happened in the field of macroeconomics over the last decades.

Macroeconomics, being the study of the economy as a whole, concerns itself with the aggregate behavior and interaction of millions of consumers and firms. The macroeconomic Keynesian models of the postwar period were still relatively simplistic. While being able to describe macroeconomic aggregates, they more or less completely disregarded microeconomic behavior, the actions and incentives of individual agents in the economy.

This changed in the early 1980s with the emergence of real business-cycle (RBC) theory. These models incorporated the microeconomic behavior of individuals and firms in the economy. Some of the main assumptions are that individuals are completely rational and maximize their utility. Firms are profit maximizers. Markets are perfectly competitive and clear at all times, even the labor market, thus ruling out the existence of involuntary unemployment altogether. Prices and wages are flexible and there is one unique macroeconomic equilibrium (Summers, 2002).

While the models are mathematically consistent, the assumptions are so far removed from reality that they do not square in any way with the data. RBC theory suggests that recessions are driven by technology shocks, a claim that cannot be supported by evidence (Summers, 2002). Furthermore, the theory holds that monetary shocks are irrelevant. Yet more than a century of data suggests that monetary shocks are highly important and probably the main driver of most economic downturns in advanced economies (Romer and Romer, 2004; Friedman and Schwartz, 1963). After all, Rudiger Dornbusch famously said: "Expansions don't die of old age. Every single one of them was murdered by the Fed."

Finally, RBC theory would have us believe that the Great Depression, with more than 25% unemployment in the U.S., was actually the result of a mass decision to take a long vacation. Nevertheless, the theory was influential enough that several of its proponents were awarded the Nobel Prize in Economic Sciences (Krugman, 2009).

While the real breakthrough was that RBC models incorporated microeconomic behavior for the first time, their flawed assumptions made these models much less realistic than older Keynesian models, which only described macroeconomic aggregates without being overly concerned with microeconomic optimization problems.

Over the last decades, many macroeconomists have embarked on a new research agenda called neo-Keynesian economics. One should note, however, that neo-Keynesian economics is very far removed from the macroeconomics that Keynes originally suggested in his "General Theory". More specifically, neo-Keynesian models accepted and adopted for the most part the core of RBC models. According to Lucas, macroeconomic models must rest on microfoundations, the explicit modelling of microeconomic behavior, to be consistent. This is the so-called Lucas critique, which states that a past relationship between two economic variables cannot be used in formulating policies for the future because economic agents change their behavior once policy makers try to exploit such a relationship. Macroeconomic models that explicitly model the microeconomic behavior of agents, on the other hand, supposedly do not fall into such a trap because the response of agents to a change in economic policy is baked into the model (Lucas, 1976). However, here lies the big problem. What if the microfoundations are flawed in the first place? If the assumption of completely rational and foresighted economic agents does not hold, if firms hold market power to varying degrees and markets do not adhere to the abstraction of perfect competition, then microfounded models will be just as wrong despite being internally consistent in terms of their mathematical statements about the world. In the words of DeLong (2014): "...models with micro foundations are not of use in understanding the real economy unless you have the micro foundations right. And if you have the micro foundations wrong, all you have done is impose restrictions on yourself that prevent you from accurately fitting reality."

Neo-Keynesian macroeconomics took the core RBC models on board, mostly because of their mathematical elegance, one suspects, and added one ad-hoc assumption after another in order to make the models fit the data. Instead of assuming perfectly flexible prices, neo-Keynesians introduced wage stickiness in the labor market and price stickiness in product markets (Woodford, 2009). While there is overwhelming empirical evidence that both prices and wages are sticky in the short to medium run (Bernanke and Carey, 1996), these nominal rigidities were introduced on a very ad-hoc basis in the neo-Keynesian model. "Calvo pricing" introduced staggered prices. The so-called "Calvo fairy" simply imposes exogenous price changes that follow a well-behaved probability distribution (Calvo, 1983). These small nominal rigidities are enough for nominal demand shocks to have real effects in the model, which leaves room for government interventions, namely countercyclical demand management by monetary and fiscal authorities. While this is an improvement over RBC models, it was pretty much a false victory because it was achieved using ad-hoc assumptions that have little basis in reality. These and other false victories have been the main achievement of neo-Keynesian economics over the last couple of decades, as the following examples will illustrate.
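
To make the "Calvo fairy" concrete, here is a minimal Python sketch. It assumes the standard textbook reading of Calvo (1983), in which each firm keeps its old price in any given period with a fixed probability, regardless of how long that price has been in place. The parameter name `theta`, its value of 0.75, and the number of simulated firms are illustrative assumptions, not figures taken from the essay or from Calvo's paper.

```python
import random


def share_unchanged(theta: float, periods: int) -> float:
    """Share of firms whose price has not been reset after `periods` periods."""
    return theta ** periods


def expected_duration(theta: float) -> float:
    """Mean length of a price spell (mean of a geometric distribution)."""
    return 1.0 / (1.0 - theta)


def simulate_mean_spell(theta: float, n_firms: int, seed: int = 0) -> float:
    """Draw one price spell per firm and return the average spell length."""
    rng = random.Random(seed)
    total = 0
    for _ in range(n_firms):
        spell = 1  # a newly set price lasts at least one period
        # With probability theta the firm is not allowed to reset this period.
        while rng.random() < theta:
            spell += 1
        total += spell
    return total / n_firms


THETA = 0.75  # assumed probability of keeping the old price each period
analytic = expected_duration(THETA)
simulated = simulate_mean_spell(THETA, n_firms=20_000)
unchanged_after_4 = share_unchanged(THETA, 4)

print(f"analytic mean spell:  {analytic:.2f} periods")
print(f"simulated mean spell: {simulated:.2f} periods")
print(f"share of prices unchanged after 4 periods: {unchanged_after_4:.3f}")
```

Note that the timing of a price change in this sketch is unrelated to anything the firm decides, which is the sense in which the rigidity is imposed rather than derived.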

The core neo-Keynesian model also ignores the role of credit, for example. One solution was to simply introduce a "financial accelerator" à la Bernanke, which amplifies an initial shock to the economy by also changing credit conditions as the value of the underlying collateral against which credit is made available fluctuates throughout the business cycle (Bernanke et al., 1999). Alternatively, Eggertsson and Krugman (2012) move away from the "representative agent" framework since not all economic agents are equal (this feels like a truism). Instead, they simply assume that a subset of individuals is credit-constrained.

Similarly, most macroeconomic models do not contain a financial sector. This turned out to be an important shortcoming in light of the Global Financial Crisis. Again, this problem was fixed by simply introducing some kind of financial friction into the model that allows deviations from perfectly functioning financial markets (Hall, 2010).

All these previous examples illustrate that modern macroeconomics shares some key features of a degenerative research program as defined by Lakatos et al. (1980). Instead of refuting and replacing the core foundations, which seem to be flawed, more and more auxiliary hypotheses are added to protect the research agenda. Increasing the number of ad-hoc assumptions increases the complexity of the model, but it does not necessarily make the model a better fit.

An alternative set of models?

The natural question we have to ask ourselves is: what should be done about all of this? How can we push the field of macroeconomics in the right direction, given that we adhere to the belief that the current paradigm is flawed in one way or another?

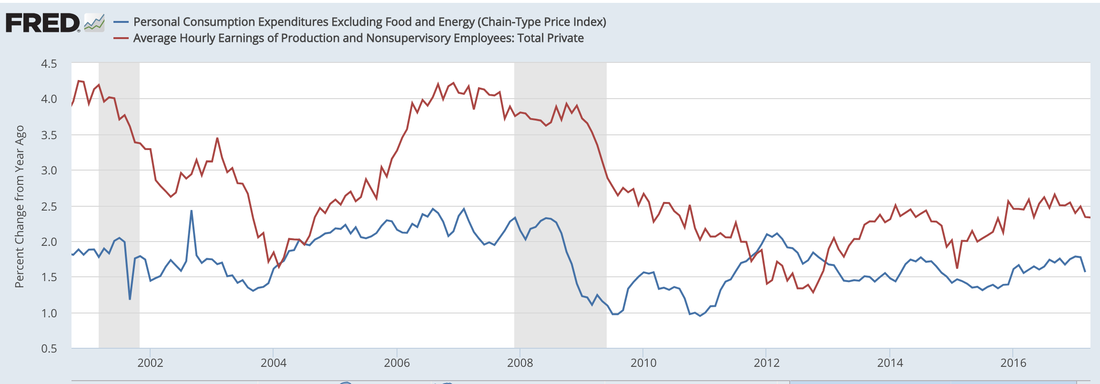

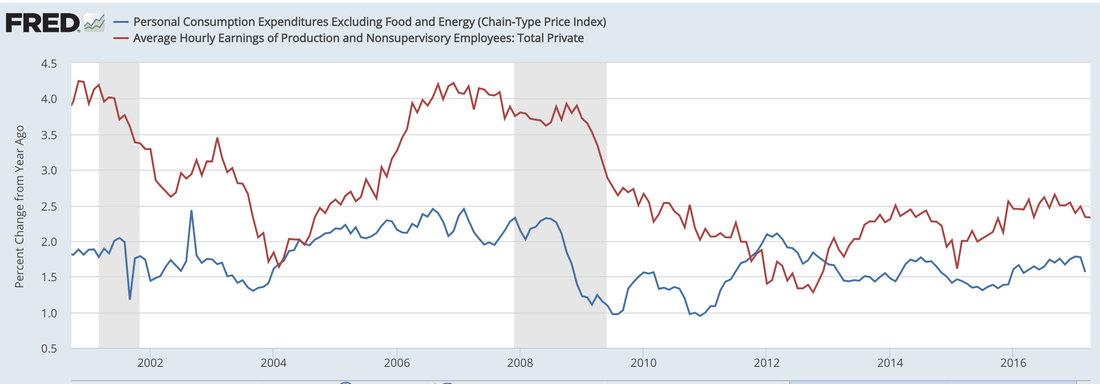

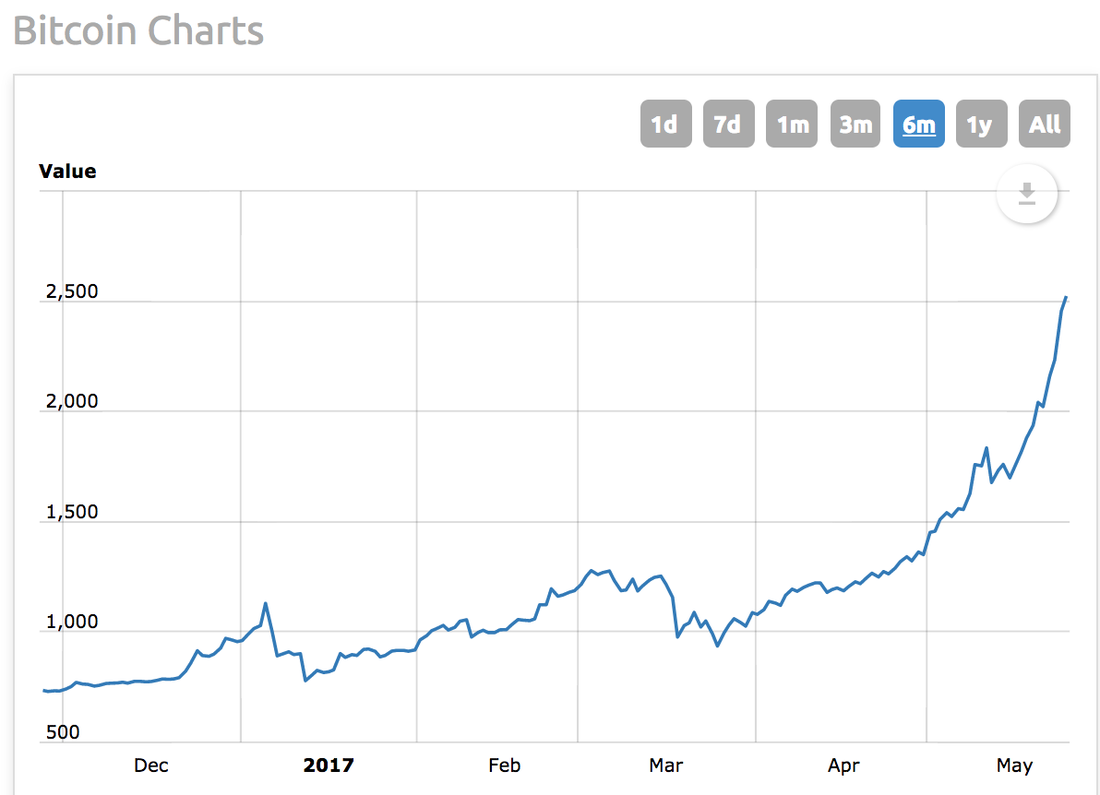

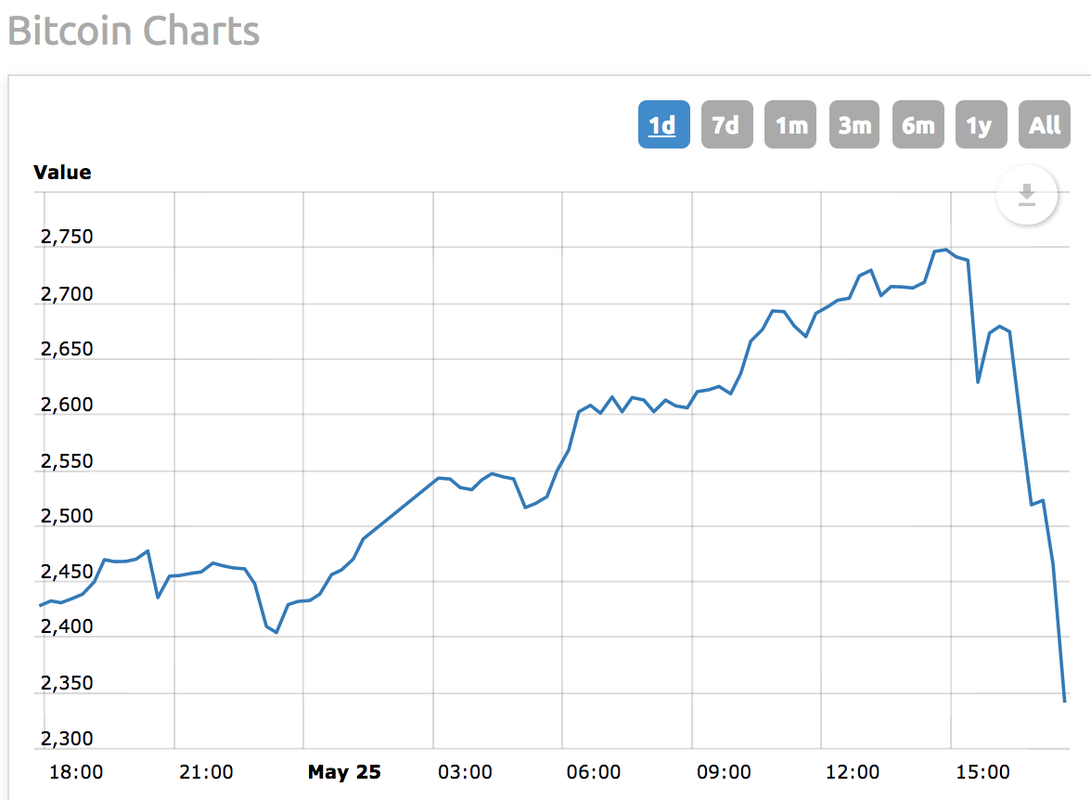

Again, Roger Farmer's (2016) book "Prosperity for All" might provide the answer. Farmer introduces a new macroeconomic model, which eliminates one of the key assumptions of modern macroeconomics, the so-called natural rate hypothesis. This concept was originally introduced by Friedman (1977). Most macroeconomic models assume that there is one single macroeconomic equilibrium. Price stability, in general defined as 2% inflation, is associated with one unique unemployment rate. Pushing the unemployment rate below the natural level leads to higher inflation, whereas an unemployment rate exceeding the natural rate leads to lower inflation. Farmer (2016) discards the idea that there is one unique macroeconomic equilibrium, a concept that rests on a number of extremely strong assumptions, which are unlikely to hold in reality. Instead, he introduces a model with multiple equilibria in which a particular inflation rate can be associated with an entire range of different unemployment rates. As an example, in 2012, a few years after the Global Financial Crisis, the Fed estimated that the natural rate of unemployment might be about 6.5%. This was at a time when actual unemployment was still above 8% (Board of Governors of the Federal Reserve System, 2012). By May 2017, the Fed had pushed the unemployment rate down to 4.4%, and inflation is still undershooting the 2% target. One interpretation of current events is that the Fed simply underestimated the natural rate back in 2012 by a big margin. However, a more plausible interpretation of the data is that an entire range of unemployment rates is consistent with price stability. Accordingly, a more expansionary policy right after the financial crisis could have pushed down unemployment faster without increasing price pressures. The concept of multiple equilibria has so far found more endorsement in international macroeconomics. There is an abundant literature on how currency crises can be caused by speculative attacks that have a self-fulfilling nature, such as in Obstfeld (1996) and Krugman (1996), for example. Any story that involves Keynesian self-fulfilling prophecies (Keynes, 1937) naturally evolves from a model with multiple equilibria. On the domestic front, however, most macroeconomic models adhere to the story of a unique macroeconomic equilibrium, most likely because such models are more tractable and easier to handle.
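
The natural rate hypothesis described above can be written down in a few lines. The sketch below assumes a textbook accelerationist Phillips curve, in which the change in inflation is proportional to the gap between the natural rate and actual unemployment. The functional form, the slope `ALPHA`, and all numbers are illustrative assumptions; this is the view Farmer (2016) rejects, not his model.

```python
def inflation_path(start_inflation, unemployment, natural_rate, alpha, periods):
    """Inflation over time when unemployment is held at a constant level.

    Assumed rule: inflation changes each period by
    alpha * (natural_rate - unemployment).
    """
    path = [start_inflation]
    for _ in range(periods):
        path.append(path[-1] + alpha * (natural_rate - unemployment))
    return path


NATURAL_RATE = 5.0  # assumed natural rate of unemployment, in percent
ALPHA = 0.5         # assumed slope of the Phillips curve

below = inflation_path(2.0, 4.0, NATURAL_RATE, ALPHA, periods=4)
at_natural = inflation_path(2.0, 5.0, NATURAL_RATE, ALPHA, periods=4)
above = inflation_path(2.0, 6.0, NATURAL_RATE, ALPHA, periods=4)

print("unemployment below natural rate:", below)
print("unemployment at natural rate:   ", at_natural)
print("unemployment above natural rate:", above)
```

Under this assumption exactly one unemployment rate keeps inflation stable. In a model with multiple equilibria of the kind the essay describes, a whole range of unemployment rates would be compatible with a flat inflation path.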

An alternative route might be the introduction of chaos theory into macroeconomics. Given that the economy is a complex dynamic system in which millions of consumers and firms interact with each other on a daily basis, one might find it surprising that most macroeconomic models contain a single equilibrium and that most variables are modelled in terms of linear systems of equations. While such linear systems might describe the economy well in times of little perturbation, it is unclear whether such a model does equally well in times of crisis. The failure of Lehman Brothers in the fall of 2008 turned out to be a huge shock to the financial system. While early estimates suggested that the economy would contract by less than 4% in the last quarter of 2008, the true figure turned out to be a contraction of almost 9%. The economy went into free fall.

In a dynamic system, small changes in initial conditions compound over time and can lead to dramatically different outcomes, the proverbial butterfly wings that cause a hurricane. The weather is such a complex and dynamic system. As a result, meteorologists use relatively complex dynamic models, which try to predict the weather in the days and weeks ahead. However, small changes in input data can lead to rough weather conditions such as storms in one simulation while the next simulation predicts calm weather instead. This is because small mistakes in a dynamic system compound over time and can thus lead to extremely large forecast errors. Nevertheless, Nate Silver's (2012) fascinating book "The Signal and the Noise" describes how weather forecasts have become increasingly accurate over the last few decades, at least at short time horizons (1-2 weeks ahead). Hurricane and storm forecasts have also become much better over time. Meteorologists were very much aware days in advance that Katrina would hit the coast close to New Orleans. Theoretically, the disaster could have been averted with better evacuation management. However, the edge that weather forecasters have over economists is that the data at hand is much better. Cloud formation can be studied using satellite data. Land and oceanic temperatures as well as atmospheric pressures are measured with high accuracy.
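
The way small errors compound can be illustrated with a toy system. The sketch below uses the logistic map with parameter 4, a standard textbook example of chaotic dynamics. It is chosen purely for illustration and is neither a weather model nor a macroeconomic model; the starting value and the size of the initial gap are likewise arbitrary assumptions.

```python
def logistic_path(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate the logistic map x -> r * x * (1 - x) starting from x0."""
    path = [x0]
    for _ in range(steps):
        x = path[-1]
        path.append(r * x * (1.0 - x))
    return path


STEPS = 60
base = logistic_path(0.2, STEPS)
# Same system, but the starting point differs in the tenth decimal place.
perturbed = logistic_path(0.2 + 1e-10, STEPS)
gaps = [abs(a - b) for a, b in zip(base, perturbed)]

for t in (0, 10, 20, 30, 40, 50, 60):
    print(f"step {t:2d}: gap between the two paths = {gaps[t]:.3e}")
print(f"largest gap over the whole run: {max(gaps):.3f}")
```

The two paths are indistinguishable for the first few steps and unrelated later on, which is the same pattern that limits weather forecasts to short horizons.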

Economic data, on the other hand, is much fuzzier. Some optimists thought that big data would bring about a new era in which economic forecasts would become more and more accurate. So far, however, this has not happened. Instead, having access to more and more economic data might actually cloud the picture rather than reveal useful information. A common problem with time series data is that of spurious regressions. From a statistical point of view, it has been found that butter production in Bangladesh can explain 75% of the variation in the S&P 500 in the 1990s. The statistical fit increases to an astonishing 99% if you include U.S. cheese production and Bangladesh sheep production (Leinweber, 2007). Of course, common sense suggests that this is just a strange statistical anomaly. Those three variables should in no way explain movements in U.S. stock prices. One of the concerns with big data is that instead of finding true relationships, one will only find more and more spurious regressions. As a consequence, economists are no closer to predicting recessions. Indeed, economists are even struggling to measure current growth rates adequately. As an example, U.S. GDP figures for the first quarter of 2017 are only released by the middle of April. Over the course of the following three months, these figures are revised another two times. Moreover, these revisions turn out to be quite substantial. There is, on average, more than a percentage point deviation from the initial estimate to the final release (Fixler et al., 2011).
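
The spurious-regression problem is easy to reproduce. The following sketch generates pairs of series that are independent by construction and compares the R² of a simple regression when the series are random walks with the R² when they are plain noise. The series length, the number of replications, and the seed are arbitrary assumptions; the data from Leinweber (2007) is not used here.

```python
import random


def r_squared(x, y):
    """R-squared of a simple linear regression of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def noise(rng, n):
    """Independent standard normal draws (a stationary series)."""
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def random_walk(rng, n):
    """Cumulative sum of independent shocks (a trending, non-stationary series)."""
    level, path = 0.0, []
    for shock in noise(rng, n):
        level += shock
        path.append(level)
    return path


def mean_r2(make_series, reps, n, seed):
    """Average R-squared across many pairs of unrelated series."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(reps):
        total += r_squared(make_series(rng, n), make_series(rng, n))
    return total / reps


REPS, N = 200, 200  # assumed number of replications and observations per series
mean_walk = mean_r2(random_walk, REPS, N, seed=1)
mean_noise = mean_r2(noise, REPS, N, seed=1)

print(f"average R-squared, unrelated random walks: {mean_walk:.3f}")
print(f"average R-squared, unrelated noise series: {mean_noise:.3f}")
```

Neither pair of series has any true relationship, yet the trending series tend to produce a much better apparent fit, which is why adding more time series does not by itself add information.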

There is a chance that bailing out Lehman Brothers with a few dozen billion dollars in the fall of 2008 could have prevented the subsequent downward spiral, which wiped out trillions of dollars in wealth as stock and housing markets crashed around the world. Furthermore, millions of Americans suddenly found themselves without a job as U.S. GDP contracted by almost a trillion dollars within a short time span. Seen through the lens of chaos theory, a small change in initial conditions, a Lehman bailout compared to no bailout, might have mitigated or even prevented the Great Recession altogether. Ultimately, chaos theory has not found any application in macroeconomics so far because the data is much fuzzier and of more questionable accuracy than in meteorology, for example. Since macroeconomic data problems are unlikely to be resolved any time soon, chaos theory will most likely turn out to be a dead end in this field.

Not everything is lost: Current developments in the field

Ricardo Reis (2017) offers a vehement defense of modern macroeconomics and explains how some of the criticism simply does not hold up under closer scrutiny. More specifically, current developments in the field have little to do with the overgeneralizations that some critics would have us believe. His first line of defense is that macroeconomics simply cannot deliver in terms of forecasts and should not be expected to do so. Forecasting recessions is simply a fool's game given data problems, inherent uncertainty, actions and reactions by policy makers and economic agents, etc. According to the newspaper The Economist (2016), there were 220 instances in which a year of positive growth was followed by a year of negative growth across all major countries over the past several decades. In its April forecast during the year of positive growth, the IMF never once predicted the coming recession. A recent example of a failed forecast is related to Brexit. The Bank of England (BoE) took quite a beating because it predicted an economic slowdown in the aftermath of the Brexit vote, whereas in reality economic growth accelerated, at least for a short time span.

However, in response to the "leave" vote and its own pessimistic internal forecast, the BoE quickly lowered interest rates and introduced a new asset purchase program (Quantitative Easing), which had the intended effect of counteracting any negative short-run effects. In effect, the BoE made its own pessimistic forecast irrelevant by acting quickly and decisively in the aftermath of the vote.

Macroeconomics should not be evaluated in terms of its forecasts, but instead in terms of recession prevention and macroeconomic outcomes (Reis, 2017). The Great Moderation has been an extraordinary era of low inflation and economic stability in advanced economies. While some attribute it to luck (Ahmed et al., 2004), it seems that Central Banks have become somewhat more competent in managing the macroeconomy. Stock markets crashed around the world on Black Monday in October 1987, wiping out trillions of dollars in assets. Regardless, economic activity was not really affected as the Greenspan Fed acted decisively to mitigate any harm that such a crash could have done to the financial system and the real economy. Similarly, the spectacular failure of the over-leveraged hedge fund Long-Term Capital Management in the wake of the Asian financial crisis also did not cause a meltdown in the financial system because the New York Fed quickly engineered a bailout with a consortium of private banks (Lowenstein, 2000). According to Reis (2017), recent years have reaffirmed that Central Bankers as managers of the macroeconomy have performed reasonably well. However, this is a claim I cannot wholeheartedly endorse. While it is true that a second Great Depression was avoided thanks to so-called unconventional measures like bank bailouts and Quantitative Easing, it nevertheless took the Fed ten years to return to full employment. Developments in the Eurozone were even more dire as the ECB caused a double-dip recession by hiking interest rates prematurely in 2011. Furthermore, fiscal policy makers implemented harsh austerity measures, which exacerbated the downturn. Note, however, that most of these policy mistakes were made against the advice of many prominent academics who warned about the length and the severity of the economic downturn well in advance.

The current consensus in macroeconomics is that the 2% inflation target has outlived its usefulness in the current low-growth and low-interest-rate environment, also dubbed "secular stagnation" (Summers, 2014). DeLong and Summers already warned back in the 1990s that a low inflation target could increase the risk of severe recessions as it raises the probability of zero lower bound episodes (DeLong and Summers, 1992), a warning that anticipated the problems Central Banks have been facing in recent years. Macroeconomic research suggests that Central Banks should either adopt a higher inflation target, maybe 4% (Ball, 2014), or implement a level target for either nominal GDP or prices (Svensson, 1996). A full decade after the Great Recession, Central Bankers are finally coming around, moving more and more towards this new consensus that has emerged in the aftermath of the Global Financial Crisis (Williams, 2016). Nevertheless, Central Banks are very conservative and rigid institutions and it will take time until policy makers have adapted to the new economic realities.

Last but not least, Reis (2017) argues that the latest macroeconomic research is actually very different from the proverbial straw man. A lot of job market papers are about empirical research. Furthermore, insofar as theoretical models are used and applied, they are very different from the framework of representative agents, perfect foresight, and rational expectations.

To sum up:

Modern macroeconomic theory took a gigantic wrong turn in the 1980s. Friedman (1953) argues that "...theory is to be judged by the class of phenomena which it is intended to explain". Using this criterion, RBC has clearly failed the test. Advocates of RBC models would have us believe in the perfection of markets, in the inefficacy of government interventions and macroeconomic stabilization tools, in unemployment simply being an optimal and voluntary response to negative shocks, and many other fairy tales. The neo-Keynesian revolution took over the core assumptions of the RBC model and added one minor twist after another, ranging from sticky wages and prices to financial frictions, in order to make the model somewhat more Keynesian in spirit. It is revealing that many professional forecasters of macroeconomic data do not even rely on neo-Keynesian models because they tend to have little predictive power. In that sense, modern DSGE models are failing the market test (Smith, 2014). This "bastard Keynesianism", as Roger Farmer (2016) has dubbed it, bears little resemblance to many of the ideas Keynes originally proposed in his "General Theory" (1937). Accordingly, Farmer (2016) calls for a new set of models. So far, the profession has been somewhat slow and reluctant to change. On the other hand, a lot of new macroeconomic research in the aftermath of the Great Recession has been more empirical and more applied, rather than more or less senseless DSGE modelling without empirical falsification of any of the existing models out there. Apparently it took an earthquake like the Global Financial Crisis to shake up the profession. However, only time will tell whether this truly constitutes a paradigm shift or whether there will be business as usual in the years to come.

References:

- Ahmed, Shaghil, Andrew Levin, and Beth Anne Wilson. "Recent US macroeconomic stability: good policies, good practices, or good luck?." Review of economics and statistics 86, no. 3 (2004): 824-832.

- Ball, Laurence. "The case for a long-run inflation target of four percent." (2014).

- Bernanke, Ben S., Mark Gertler, and Simon Gilchrist. "The financial accelerator in a quantitative business cycle framework." Handbook of macroeconomics 1 (1999): 1341-1393.

- Bernanke, Ben S., and Kevin Carey. "Nominal wage stickiness and aggregate supply in the Great Depression." The Quarterly Journal of Economics 111, no. 3 (1996): 853-883.

- Calvo, Guillermo A. "Staggered prices in a utility-maximizing framework." Journal of monetary Economics 12, no. 3 (1983): 383-398.

- DeLong, Brad. "“Microfoundations”: I Do Not Think That Word Means What You Think It Means: Wednesday Focus." Equitable Growth. June 23, 2014. Accessed May 04, 2017. http://equitablegrowth.org/equitablog/microfoundations-i-do-not-think-that-word-means-what-you-think-it-means-wednesday-focus/.

- De Long, J. Bradford, and Lawrence H. Summers. "Macroeconomic policy and long-run growth." Economic Review-Federal Reserve Bank of Kansas City 77, no. 4 (1992): 5.

- Eggertsson, Gauti B., and Paul Krugman. "Debt, deleveraging, and the liquidity trap: A Fisher-Minsky-Koo approach." The Quarterly Journal of Economics 127, no. 3 (2012): 1469-1513.

- Farmer, Roger E. A. Prosperity for All: How to Prevent Financial Crises. Oxford University Press, 2016.

- "Federal Reserve issues FOMC statement." Board of Governors of the Federal Reserve System. December 12, 2012. Accessed May 04, 2017. https://www.federalreserve.gov/newsevents/pressreleases/monetary20121212a.htm.

- Fixler, Dennis J., Ryan Greenaway-McGrevy, and Bruce T. Grimm. "Revisions to GDP, GDI, and their major components." Survey of Current Business 91, no. 7 (2011): 9-31.

- Friedman, Milton. "The methodology of positive economics." (1953): 3-43.

- Friedman, Milton. "Nobel lecture: inflation and unemployment." Journal of political economy 85, no. 3 (1977): 451-472.

- Friedman, Milton, and Anna J. Schwartz. "A Monetary History of the United States, 1867–1960." NBER Books (1963).

- Hall, Robert E. "Why does the economy fall to pieces after a financial crisis?." The Journal of Economic Perspectives 24, no. 4 (2010): 3-20.

- Keynes, John Maynard. "The general theory of employment." The Quarterly Journal of Economics 51, no. 2 (1937): 209-223.

- Krugman, Paul. "Are currency crises self-fulfilling?." NBER Macroeconomics annual 11 (1996): 345-378.

- Krugman, Paul. "How did economists get it so wrong?." The New York Times Magazine, September 2, 2009.

- Kuhn, Thomas S. The Copernican revolution: Planetary astronomy in the development of Western thought. Vol. 16. Harvard University Press, 1957.

- Lakatos, Imre, John Worrall, and Gregory Currie. The methodology of scientific research programmes: Volume 1: Philosophical papers. Vol. 1. Cambridge University Press, 1980.

- Leinweber, David J. "Stupid data miner tricks: overfitting the S&P 500." The Journal of Investing 16, no. 1 (2007): 15-22.

- Lowenstein, Roger. When genius failed: the rise and fall of Long-Term Capital Management. Random House trade paperbacks, 2000.

- Lucas, Robert E. "Econometric policy evaluation: A critique." In Carnegie-Rochester conference series on public policy, vol. 1, pp. 19-46. North-Holland, 1976.

- Obstfeld, Maurice. "Models of currency crises with self-fulfilling features." European economic review 40, no. 3 (1996): 1037-1047.

- Popper, Karl. "Science: Conjectures and refutations." The philosophy of science: an historical anthology 471 (2009).

- Reis, Ricardo. "Is something really wrong with macroeconomics?." (2017).

- Romer, Christina D., and David H. Romer. "A new measure of monetary shocks: Derivation and implications." The American Economic Review 94, no. 4 (2004): 1055-1084.

- Silver, Nate. The signal and the noise: Why so many predictions fail-but some don't. Penguin, 2012.

- Smith, Noah. "Noahpinion." The most damning critique of DSGE. January 10, 2014. Accessed May 23, 2017. http://noahpinionblog.blogspot.se/2014/01/the-most-damning-critique-of-dsge.html.

- Summers, Lawrence H. "16 Some skeptical observations on real business cycle theory." A Macroeconomics Reader (2002): 389.

- Summers, Lawrence H. "US economic prospects: Secular stagnation, hysteresis, and the zero lower bound." Business Economics 49, no. 2 (2014): 65-73.

- Svensson, Lars EO. Price Level Targeting vs. Inflation Targeting: A Free Lunch?. No. w5719. National bureau of economic research, 1996.

- "A mean feat." The Economist. January 09, 2016. Accessed May 10, 2017. http://www.economist.com/node/21685480.

- Williams, John C. "Monetary policy in a low R-star world." FRBSF Economic Letter 23 (2016).

- Woodford, Michael. "Convergence in macroeconomics: elements of the new synthesis." American economic journal: macroeconomics 1, no. 1 (2009): 267-279.
